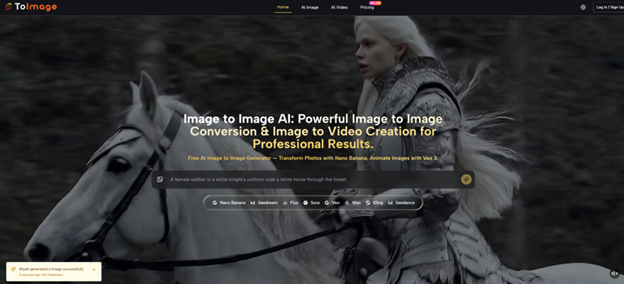

Most people first discover AI image tools through text prompts. Type a few words, wait a few seconds, and a brand-new picture appears. It feels exciting at first. But after the novelty fades, a more practical problem shows up: starting from zero every time is often inefficient.

That is exactly why image-to-image is becoming a more useful workflow for creators, marketers, designers, and everyday users. Instead of asking AI to guess your vision from scratch, you begin with a real image and guide it toward a better result. That single shift makes the process feel less random, more controllable, and much closer to how visual work actually happens in the real world.

Why Starting From an Existing Image Changes Everything

Blank-canvas generation sounds powerful, but it often creates unnecessary friction. You spend time trying to describe things that are already obvious in your mind: the angle, the lighting, the pose, the product shape, the facial structure, or the overall mood. Even a strong prompt can still send the output in the wrong direction.

Image-to-image works differently. It gives the model a visual anchor. That means the AI is not inventing everything from nothing. It is responding to something concrete and transforming it.

This makes a huge difference in practice. When you already have a product shot, a portrait, a sketch, or a reference composition, the goal usually is not “create anything.” The goal is “keep the essence, but improve or restyle it.” That is a far more common creative task than people realize.

How the Workflow Feels in Real Use

A good image-to-image workflow is less about magical prompts and more about controlled evolution.

You begin with an image that already contains something valuable. Maybe it is the subject. Maybe it is the framing. Maybe it is the brand tone. Then you use AI to change what needs changing without throwing away what already works.

That makes the process feel more stable. Instead of chasing the same idea repeatedly through prompt variations, you can push one strong base image in different directions. You can make it more polished, more stylized, more cinematic, more commercial, or more expressive.

In other words, image-to-image is not just another generation mode. It is often the mode that turns AI image tools into something genuinely practical.

Why It Fits Real Creative Work Better

There is a reason this approach feels more natural. Most visual projects do not begin from emptiness.

A brand already has product photos. A creator already has a portrait. A designer already has a draft. A small business already has rough marketing assets. A content team already has a visual concept that needs more range, not total reinvention.

That is where image-to-image becomes useful in a professional sense.

It preserves direction

When the source image already carries the right structure or mood, the AI has less room to drift into irrelevant territory.

It speeds up iteration

One image can become many versions. You can test multiple aesthetics, campaign directions, or visual moods without rebuilding the same concept from the ground up.

It reduces prompt burden

You still need good instructions, but you no longer have to over-explain every visible detail. The image already communicates part of the intent.

It helps consistency

If your work depends on keeping a subject, style, or visual identity recognizable across outputs, starting from references is usually far more dependable than prompting from scratch every time.

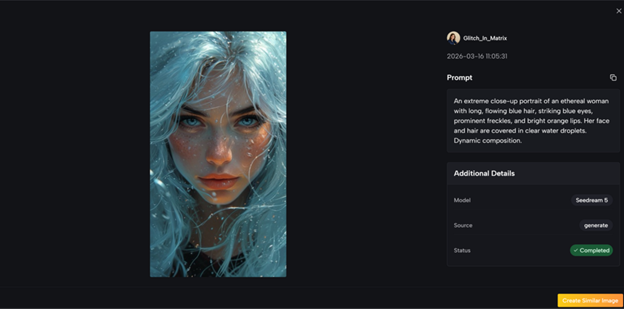

What Makes a Multi-Model Approach More Interesting

One thing that makes some image-to-image platforms more compelling is that they do not treat all image tasks as identical.

That matters because not every creative job needs the same kind of AI behavior.

Some tasks need realism. Others need speed. Some need broader visual transformation, while others need tighter control over specific edits. A platform that gives users different model options is not just offering variety for the sake of it. It is acknowledging that image creation is not one single workflow.

That becomes especially important once you move beyond casual experimentation. In real use, people care about trade-offs. They want to know when to prioritize speed, when to prioritize quality, and when to prioritize edit precision. A multi-model setup makes that possible.

A Better Way to Think About Image to Image

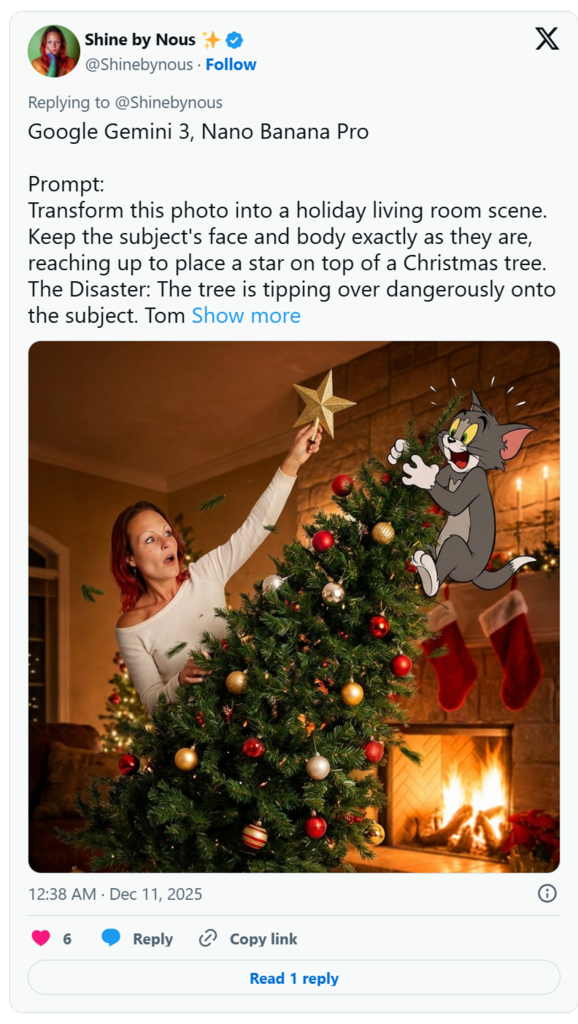

A lot of people still think of image-to-image as just a style transfer tool. That is too narrow. It is better understood as a guided visual transformation workflow:

- Sometimes that means changing a photo into an illustration.

- Sometimes it means upgrading a rough visual into something more polished.

- Sometimes it means keeping the character but changing the environment, mood, or aesthetic.

- Sometimes it means preserving the composition while rewriting the visual language around it.

That broader view is what makes image-to-image so useful. It is not only about effects. It is about direction, control, and efficiency.

Who This Is Actually Good For

This workflow is especially strong for people who already have some kind of visual starting point.

Creators and influencers

They can turn one successful image into multiple looks for different platforms and posting styles.

E-commerce and marketing teams

They can begin with simple product photos and expand them into more polished promotional visuals without organizing full new shoots every time. A reliable photo editor can then handle the final cleanup.

Designers and indie builders

They can use existing mockups, drafts, or references as foundations and then refine them faster.

Character-based projects

Any workflow that depends on visual continuity benefits from reference-driven generation, which is more predictable than relying on prompts alone.

What Good Results Still Depend On

Image-to-image can feel easier than text-to-image, but it is not effortless.

The quality of the starting image still matters. A clear, readable source usually gives the model something better to work with.

The prompt still matters too. Even when the AI sees your image, it still needs direction. You have to communicate what should remain stable and what should change.

And judgment matters most of all. AI can generate many attractive options, but not every attractive result is strategically useful. The human role is still to decide what actually fits the project.

That is why the best results usually come from iteration, not from a single perfect attempt. First get the direction right. Then refine. Then polish.

Why This Workflow Will Probably Matter Even More Over Time

As AI image tools mature, the most valuable experiences will not just be about raw generation power. They will be about control.

People do not only want more images. They want better ways to shape images. They want workflows that feel closer to editing, art direction, and creative decision-making. That is exactly where image-to-image stands out.

It matches how visual work really happens. Most projects are not born from nothing. They begin with something unfinished and move toward something better.

That is why image-to-image feels less like a gimmick and more like a lasting creative workflow. It helps people build from what they already have, keep what matters, and move faster toward results that actually feel usable.
