Security teams often believe they understand their exposure. They run vulnerability scans. They commission penetration tests. They tick compliance boxes. On paper, the risk looks contained.

Then a red team engagement tells a different story.

The difference is not technical depth alone. It is intent. A red team does not look for flaws in isolation. It looks for ways in. It thinks about access, movement, timing, human weakness, blind spots in monitoring. It behaves like a patient adversary, not an auditor.

That shift in mindset exposes gaps most organisations do not realise they carry.

What a Red Team Engagement Really Tests

A conventional penetration test examines systems within defined scope and time. It identifies exploitable weaknesses and reports them. Useful, certainly. But contained.

A red team engagement examines how an attacker could realistically compromise the organisation and achieve a specific objective. That objective might be domain dominance, data exfiltration, or access to a sensitive application. The focus sits on impact rather than enumeration.

This distinction matters.

The red team does not simply scan externally and stop. It chains weaknesses together. A forgotten subdomain. An exposed service. A user who clicks. A misconfigured identity control. Individually, none of these may appear catastrophic. Combined, they form a viable path.
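One way to picture that chaining is as a path search over individual findings. The sketch below is illustrative only: the positions, the weaknesses and the `find_attack_path` helper are hypothetical names, not output from any real engagement or tool. It treats each weakness as an edge between two attacker positions and uses a breadth-first search to find the shortest chain from the internet to the objective.

```python
from collections import deque

# Hypothetical findings. Each entry maps an attacker position to the
# (weakness, next position) pairs reachable from it. All names are illustrative.
EDGES = {
    "internet": [
        ("forgotten subdomain with an exposed service", "external foothold"),
        ("phishing email a user clicks", "staff workstation"),
    ],
    "external foothold": [("reused credentials", "staff workstation")],
    "staff workstation": [("misconfigured identity control", "domain admin")],
}


def find_attack_path(edges, start, objective):
    """Breadth-first search: return the shortest chain of weaknesses, or None."""
    queue = deque([(start, [])])
    visited = {start}
    while queue:
        position, chain = queue.popleft()
        if position == objective:
            return chain
        for weakness, next_position in edges.get(position, []):
            if next_position not in visited:
                visited.add(next_position)
                queue.append((next_position, chain + [weakness]))
    return None


path = find_attack_path(EDGES, "internet", "domain admin")
print(path)
```

No single edge in this graph reaches the objective. Only the combination does, which is why a list of isolated findings can understate the real exposure.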

Consider the breach at Target in 2013. The initial access came through a third-party HVAC vendor. The vulnerability was not exotic. The real failure sat in segmentation and detection. An attacker moved from a supplier portal to internal systems holding payment card data. A red team engagement models precisely this kind of chained compromise.

It answers a harder question: if someone genuinely wanted to breach the organisation, how far would they get?

The Quiet Value of Adversarial Thinking

There is something uncomfortable about watching a red team operate. Security teams often discover that controls they believed effective are either misconfigured or not monitored properly.

Logging exists, but no one reviews it in real time. Alerts trigger, yet analysts dismiss them as noise. Privileged accounts remain active long after projects end. These are not technical failures. They are operational ones.

A mature red team engagement does not only highlight technical exposure. It measures detection and response. It tests whether the blue team can identify lateral movement. Whether endpoint tools notice credential dumping. Whether unusual authentication patterns raise suspicion.

When the exercise finishes, the most valuable output is rarely the list of vulnerabilities. It is the evidence of how the organisation responded while under pressure.

That feedback cannot be simulated through documentation reviews.

How a Red Team Engagement Typically Unfolds

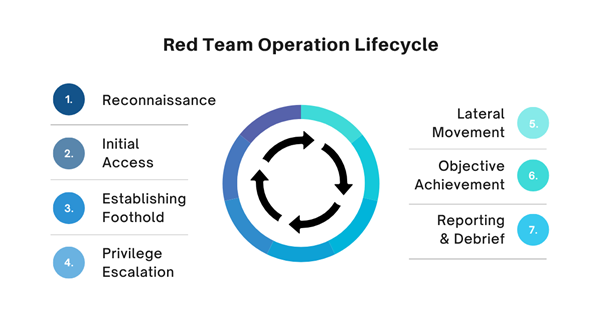

Every engagement differs in scope and ambition. Yet most follow a loose structure, even if it appears fluid from the outside.

Before looking at the mechanics, it helps to see the flow as a progression rather than isolated tasks.

1. Reconnaissance

The red team gathers intelligence. Open-source data, leaked credentials, domain information, supplier relationships. No alerts triggered yet. Quiet observation.

2. Initial Access

Access may come through phishing, exposed services, stolen credentials, or physical entry in some cases. The method matters less than the realism.

3. Establishing Foothold

Persistence mechanisms are deployed. Web shells, scheduled tasks, credential reuse. The aim is stability without detection.

4. Privilege Escalation

Local administrator access often leads to domain-level compromise. Misconfigurations in identity and access management commonly appear here.

5. Lateral Movement

Movement across endpoints and servers tests network segmentation and monitoring capability.

6. Objective Achievement

Data extraction, ransomware simulation, or control over critical infrastructure. The predefined goal is reached, if possible.

7. Reporting and Debrief

The final stage analyses what happened, how it was detected, and what failed silently.
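For the debrief, "what failed silently" can be made concrete by recording, for each stage, whether the red team succeeded and whether the blue team noticed. The following is a minimal sketch under assumed data: the `StageResult` record and the sample outcomes are hypothetical, not a standard reporting format.

```python
from dataclasses import dataclass


@dataclass
class StageResult:
    stage: str
    achieved: bool  # did the red team complete this stage?
    detected: bool  # did the blue team raise an alert during it?


def failed_silently(results):
    """Stages the red team completed without any detection."""
    return [r.stage for r in results if r.achieved and not r.detected]


# Illustrative outcomes only; a real engagement would fill these in.
results = [
    StageResult("Reconnaissance", achieved=True, detected=False),
    StageResult("Initial Access", achieved=True, detected=True),
    StageResult("Establishing Foothold", achieved=True, detected=False),
    StageResult("Privilege Escalation", achieved=True, detected=False),
    StageResult("Lateral Movement", achieved=True, detected=True),
    StageResult("Objective Achievement", achieved=False, detected=True),
]

silent = failed_silently(results)
print(silent)
```

A table like this separates two questions that often get merged: whether a control stopped the attacker, and whether anyone knew the attacker was there.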

On paper this looks orderly. In practice, it feels messy. Some stages collapse into one another. A single phishing email may shortcut several steps. Or an overlooked misconfiguration might provide immediate privilege escalation.

That unpredictability is the point.

Why Organisations Hesitate

There is often reluctance before commissioning a red team engagement. Some executives fear disruption. Others worry about reputational damage if findings leak. Security leaders sometimes fear exposure of weaknesses they suspect but cannot prove.

The reality is simpler. The weaknesses exist whether tested or not.

A red team exercise conducted with proper governance does not create risk. It reveals it in a controlled environment. The alternative is waiting for a criminal group to perform the same test without permission.

Look at the ransomware incidents affecting NHS organisations during the 2017 WannaCry outbreak. The vulnerability exploited was known and patchable. Yet segmentation, patch management, and monitoring failures allowed rapid spread. A realistic adversarial simulation could have highlighted that exposure earlier.

Security posture improves when assumptions are challenged. Not when they are protected.

Measuring What Matters

Traditional security metrics often focus on volume. Number of vulnerabilities. Patch timelines. Alert counts. These metrics show activity, not resilience.

A red team engagement produces different measures. Time to detect. Time to contain. Depth of compromise before response. Communication flow between technical and executive teams.

These are harder to quantify neatly. They also matter far more during a real incident.
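Harder does not mean impossible. If the engagement keeps a timestamped log, time to detect and time to contain reduce to simple subtraction. The sketch below assumes a hypothetical timeline with three recorded moments. The field names and dates are invented for illustration.

```python
from datetime import datetime, timedelta
from typing import Optional

# Hypothetical engagement timeline; timestamps and keys are illustrative.
timeline = {
    "initial_access": datetime(2024, 3, 4, 9, 15),
    "first_detection": datetime(2024, 3, 6, 14, 40),
    "containment": datetime(2024, 3, 6, 19, 5),
}


def time_to_detect(t) -> Optional[timedelta]:
    """Elapsed time from initial access to first detection, or None if never detected."""
    if t.get("first_detection") is None:
        return None
    return t["first_detection"] - t["initial_access"]


def time_to_contain(t) -> Optional[timedelta]:
    """Elapsed time from first detection to containment, or None if either is missing."""
    if t.get("first_detection") is None or t.get("containment") is None:
        return None
    return t["containment"] - t["first_detection"]


ttd = time_to_detect(timeline)
ttc = time_to_contain(timeline)
print(ttd, ttc)
```

Returning `None` for an undetected compromise is a deliberate choice in this sketch: a missing detection is a finding in its own right and should not be hidden inside an average.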

An organisation may patch critical systems within days and still fail to detect active credential abuse for months. Both statements can be true simultaneously.

That tension becomes visible under adversarial pressure.

The Human Factor

Technology rarely collapses in isolation. Human behaviour amplifies weakness.

Phishing remains effective because it targets routine. A finance employee processes hundreds of invoices. A convincing email slips through. Multi-factor authentication may exist, yet prompt fatigue leads the user to approve a malicious request.
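The fatigue pattern is one of the few human weaknesses that leaves a clear trace in authentication logs: a run of denied prompts followed by an approval. The sketch below shows one hedged way to flag it. The event format, the thresholds and the `flag_mfa_fatigue` helper are assumptions for illustration, not the behaviour of any particular identity product.

```python
from datetime import datetime, timedelta


def flag_mfa_fatigue(events, max_denials=3, window=timedelta(minutes=10)):
    """Return users who approved a prompt after at least max_denials
    denied prompts inside the preceding window."""
    flagged = set()
    recent_denials = {}  # user -> timestamps of recent denied prompts
    for when, user, outcome in sorted(events):
        # Keep only denials that still fall inside the window.
        denials = [t for t in recent_denials.get(user, []) if when - t <= window]
        if outcome == "denied":
            denials.append(when)
        elif outcome == "approved":
            if len(denials) >= max_denials:
                flagged.add(user)
            denials = []  # an approval ends the current run
        recent_denials[user] = denials
    return flagged


# Illustrative events: (timestamp, user, outcome). Users are hypothetical.
events = [
    (datetime(2024, 3, 4, 9, 0), "finance.user", "denied"),
    (datetime(2024, 3, 4, 9, 1), "finance.user", "denied"),
    (datetime(2024, 3, 4, 9, 2), "finance.user", "denied"),
    (datetime(2024, 3, 4, 9, 3), "finance.user", "approved"),
    (datetime(2024, 3, 4, 9, 0), "other.user", "denied"),
    (datetime(2024, 3, 4, 9, 1), "other.user", "approved"),
]

flagged = flag_mfa_fatigue(events)
print(flagged)
```

The thresholds here are arbitrary. In practice they would need tuning against normal user behaviour so the rule does not become more of the noise analysts already dismiss.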

A red team engagement tests these human seams. It examines how staff react to subtle manipulation. Whether policies are understood or merely documented.

It also tests executive response. When informed of suspicious activity, do leaders escalate quickly? Or does internal politics slow containment?

These softer dynamics often define breach impact more than exploit complexity.

Avoiding the Theatre of Security

There is a danger that red team exercises become performative. A single annual engagement, a dramatic presentation, then silence for twelve months. Risk drifts back into daily routine.

Real value emerges when findings feed into sustained improvement. Controls adjusted. Monitoring refined. Response playbooks rehearsed.

A red team engagement should not exist to prove competence. It should exist to stress it.

Over time, organisations that repeat adversarial simulations notice something subtle. The gap between breach attempt and detection narrows. The blue team grows sharper. Escalation paths become cleaner.

That maturity does not arrive from documentation alone.

When Is the Right Time?

There is no perfect moment. However, certain conditions make a red team engagement particularly useful.

After significant infrastructure change. Following mergers or acquisitions. When migrating to cloud environments. Or when leadership seeks assurance beyond compliance reports.

It also becomes relevant when the organisation suspects that defences appear strong on paper yet lack real validation.

Security investment without testing remains theoretical. Testing without realistic pressure remains incomplete.

The purpose is not to induce fear. It is to remove illusion.

Conclusion

A red team engagement strips away assumptions. It exposes how systems, people and processes behave under realistic adversarial pressure. It reveals the space between documented control and operational reality.

Organisations that embrace this scrutiny gain clarity. They understand not only where vulnerabilities exist, but how an attacker would exploit them and how quickly the organisation would notice.

If you’re looking for professional, expert-led red teaming services, CyberNX can help. They use cutting-edge tools, offensive security tactics and advanced methods to find and fix security holes.

The objective is simple. Identify real risk before someone else does and strengthen response before it is tested for real.

Security does not fail because of unknown threats alone. It fails when known weaknesses remain unchallenged.