Static images often fail to capture the dynamic energy of a real-life moment, leaving creators with a library of beautiful but silent fragments. When you look at a landscape or a family portrait, you remember the wind in the trees or the subtle smile that preceded the shot, yet the file itself remains frozen. This disconnect can make digital archives feel stagnant and less engaging for modern audiences who crave movement. By integrating Image to Video AI into your creative toolkit, you can bridge this gap and transform any high-quality photograph into a cinematic five-second sequence that tells a much richer story.

The rise of short-form video has placed immense pressure on individuals and businesses to produce high-quality motion content constantly. However, the traditional barriers to entry—expensive equipment, steep learning curves for editing software, and the time required for manual animation—remain prohibitively high for many. This is where generative technology steps in to democratize the creative process. By using advanced algorithms to predict and render motion, it is now possible to turn a single “moment in time” into a living experience that resonates with viewers on a deeper emotional level than a standard photo ever could.

Innovative Neural Models Powering the Future of Digital Storytelling

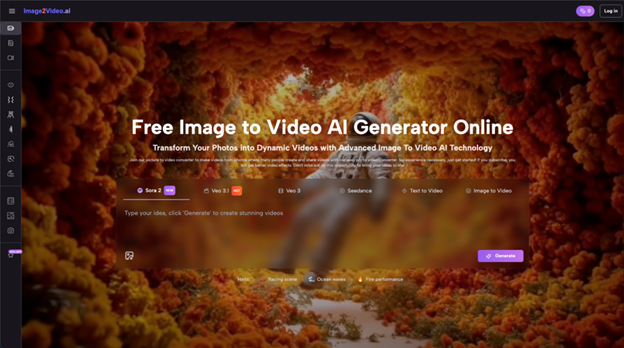

The technical foundation of this platform relies on a sophisticated ensemble of AI models that work together to interpret visual data. Instead of simply applying a filter, the system analyzes the composition of your photo to understand which elements should remain fixed and which should move. Based on my observations, models like Sora 2 and Veo 3.1 are exceptionally skilled at maintaining the structural integrity of a subject while adding fluid, realistic motion to the surrounding environment.

In my testing, I have noticed that the system does more than just move pixels; it creates a sense of physical weight and atmosphere. For example, if you upload a photo of a rainy street, the AI can simulate the way light reflects off wet pavement or how raindrops hit the ground. This level of detail is what separates a simple “moving picture” from a professional-grade video. By leveraging these high-performance models, users can achieve a level of cinematic quality that was previously only available to professional studios with massive budgets.

Analyzing How Artificial Intelligence Interprets Depth and Environmental Light

One of the most impressive feats of modern AI is its ability to perceive depth in a two-dimensional image. When you provide a photograph, the neural network builds an internal representation of the scene's depth, identifying what is in the foreground and what lies in the distance. This allows the AI to create “parallax” effects, where the distant background shifts more slowly than the nearby subject as the virtual camera moves, mimicking the way a real moving camera captures a scene.
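To make the parallax idea concrete, here is a minimal sketch under a simplified pinhole-camera assumption, where a layer's apparent shift is inversely proportional to its depth. The function name `parallax_shift`, the layer names, and the depth values are all hypothetical and chosen for illustration; this is not a description of how the platform's models work internally.

```python
def parallax_shift(camera_shift_px: float, depth: float, reference_depth: float = 1.0) -> float:
    """Apparent horizontal shift of a layer, in pixels, for a sideways camera move.

    Simplified assumption: shift is inversely proportional to depth, and
    camera_shift_px is the shift seen by a layer sitting at reference_depth.
    """
    if depth <= 0:
        raise ValueError("depth must be positive")
    return camera_shift_px * reference_depth / depth


# Hypothetical scene layers with relative depths (1.0 = nearest to the camera).
layers = {"subject": 1.0, "trees": 4.0, "mountains": 20.0}

shifts = {name: parallax_shift(10.0, depth) for name, depth in layers.items()}
for name, shift in shifts.items():
    print(f"{name}: {shift:.2f} px")
# subject: 10.00 px
# trees: 2.50 px
# mountains: 0.50 px
```

The nearby subject moves the most and the distant mountains barely move, which is the difference in speed that gives a flat photo its sense of depth.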

This depth perception is crucial for creating realistic lighting changes. If a subject moves forward in a generated video, the AI must recalculate how the light hits their face or how shadows are cast on the floor. In my experience, the platform handles these transitions with a surprising level of smoothness. This capability ensures that the final video does not look like a flat cutout moving across a screen, but rather like a three-dimensional world that has been briefly brought to life.

Simulating Natural Motion in Human Subjects and Scenic Landscapes

Capturing human movement is notoriously difficult for AI, as our brains are highly sensitive to “uncanny” or unnatural gestures. However, specialized modules within the platform, such as those designed for AI Hugs or AI Dancing, focus specifically on the nuances of human interaction. These tools are designed to respect human anatomy and physics, allowing them to generate movements that feel intentional and emotionally resonant.

When it comes to landscapes, the challenge is different. The AI must simulate the chaotic yet rhythmic patterns of nature, such as the swaying of grass or the flow of a river. Based on my personal observations, the platform excels at these environmental simulations, providing a relaxing and immersive quality to scenic shots. This makes it an ideal tool for travel bloggers or nature photographers who want to give their audience a more sensory experience of the locations they visit.

A Four-Step Workflow for Effortless Professional Video Production

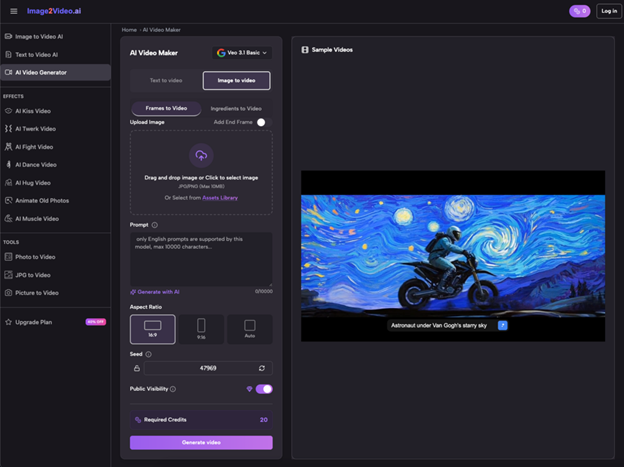

The platform is designed to be accessible to everyone, regardless of their technical background. By following a streamlined official process, users can generate high-quality videos without having to manage complex settings or local hardware configurations.

- Upload the Source Image: The process begins by selecting a high-resolution JPEG or PNG file. Starting with a clear, well-composed image ensures that the AI has a strong foundation to build upon.

- Provide Movement Instructions: The user types a simple text description of the desired action. This prompt serves as the “director’s notes,” telling the AI whether to focus on a subtle zoom or a more dramatic environmental change.

- Monitor the Processing Status: The system takes over the heavy lifting, utilizing cloud-based GPUs to render the video. This step typically takes around five minutes, during which the status will show as “processing.”

- Final Review and Export: Once the status changes to “Completed,” the user can preview the 5-second MP4 file. If the result aligns with their vision, the video can be downloaded immediately for use across various digital platforms.
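For readers who think in code, the four steps above can be sketched as a submit-then-poll loop. The article does not document any programmatic API, so everything here is an assumption: `FakeVideoService`, `submit`, `status`, `wait_for_video`, and the output file name are hypothetical, and the service is an in-memory stand-in so the example runs without a network connection.

```python
import time
from dataclasses import dataclass, field
from itertools import count


@dataclass
class FakeVideoService:
    """In-memory stand-in for a cloud video service (hypothetical, not a real API)."""
    polls_until_done: int = 3
    _jobs: dict = field(default_factory=dict)
    _ids: count = field(default_factory=lambda: count(1))

    def submit(self, image_path: str, prompt: str) -> int:
        # Steps 1 and 2: a source image plus the movement instructions.
        if not image_path.lower().endswith((".jpg", ".jpeg", ".png")):
            raise ValueError("expected a JPEG or PNG source image")
        job_id = next(self._ids)
        self._jobs[job_id] = {"polls": 0, "prompt": prompt}
        return job_id

    def status(self, job_id: int) -> str:
        # Reports "processing" until the job has been polled enough times.
        job = self._jobs[job_id]
        job["polls"] += 1
        return "completed" if job["polls"] >= self.polls_until_done else "processing"


def wait_for_video(service, job_id, poll_seconds=0.0, max_polls=100):
    """Step 3: poll until the job completes or the poll budget runs out."""
    for _ in range(max_polls):
        if service.status(job_id) == "completed":
            # Step 4: hypothetical name of the MP4 that would be downloaded.
            return f"video_{job_id}.mp4"
        time.sleep(poll_seconds)
    raise TimeoutError(f"job {job_id} did not finish after {max_polls} polls")


service = FakeVideoService()
job = service.submit("street.png", "Slow push-in; rain ripples across the wet pavement")
result = wait_for_video(service, job)
print(result)  # video_1.mp4
```

Against a real service, a poll interval of several seconds and a bounded number of attempts would be a sensible starting point, given that rendering typically takes around five minutes.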

Maximizing Engagement Through Dynamic Visual Content for Modern Brands

For brands and marketers, the transition from static to dynamic content is no longer optional. Social media algorithms tend to favor video content because it keeps users on the platform longer. A small business that uses AI-generated video for its product showcases may see higher engagement than one that relies only on static images. This technology allows for the rapid creation of high-volume content, ensuring that a brand’s feed remains fresh and visually stimulating.

| Media Category | Standard Static Photography | AI Generated Motion Video |
| --- | --- | --- |
| Attention Retention | Typically under 2 seconds | Maintains focus for 5+ seconds |
| Algorithmic Reach | Standard organic visibility | High priority on Reels and Stories |
| Narrative Impact | Single fixed perspective | Dynamic storytelling through motion |
| Production Time | Instant capture | 5-minute automated generation |
| Production Cost | Minimal to zero | Low cost compared to video shoots |
| Output Compatibility | JPEG, PNG, JPG | MP4 (Universal Support) |

Managing Creative Expectations While Exploring New Generative Video Frontiers

While the results of AI video generation can be breathtaking, it is important to approach the technology with a realistic mindset. In my testing, I have found that the quality of the video is often a direct reflection of the clarity of the input prompt. If a prompt is too vague, the AI might make “creative” choices that do not align with what you had in mind. It is often helpful to try a few different variations of a description to see which one the AI interprets most accurately.
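One low-effort way to try several variations is to build them systematically instead of rewriting each prompt by hand. The sketch below is only an illustration: the helper `build_prompts` and the example phrases are hypothetical, and nothing here reflects a prompt format the platform requires.

```python
from itertools import product

# Hypothetical building blocks: what the subject does and how the camera moves.
subject_motions = ["hair drifting in a light breeze", "a slow turn toward the camera"]
camera_moves = ["subtle zoom in", "gentle pan left"]


def build_prompts(scene, motions, cameras):
    """Combine one scene description with every motion and camera pairing."""
    return [f"{scene}, {motion}, {camera}" for motion, camera in product(motions, cameras)]


prompts = build_prompts("Portrait on a beach at sunset", subject_motions, camera_moves)
for prompt in prompts:
    print(prompt)
```

Two motions and two camera moves yield four candidate prompts, which is enough to compare how the AI interprets each phrasing without changing more than one element at a time.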

Furthermore, there are inherent limitations to what current generative models can achieve. Because the system is predicting motion based on a single frame, very complex movements or highly detailed textures can sometimes result in minor visual inconsistencies. This is a common characteristic of the current generation of AI tools. However, the ability to generate a professional-looking clip in about five minutes far outweighs the occasional need for a second attempt. As underlying models such as Seedance 2.0 continue to improve, these technical hurdles are becoming less frequent.

The Technical Superiority of Integrated High-Performance Video Models

The decision to offer multiple models within a single platform is a major advantage for serious creators. Different models are trained on different datasets, meaning they each have their own “strengths.” Some might be better at cinematic lighting, while others are superior at tracking fast-moving objects. By allowing users to choose or benefit from these varied architectures, the platform ensures that it remains versatile enough for a wide range of use cases, from professional marketing to personal hobbyist projects.

In my observation, the “Veo” series of models integrated into the site provides a very stable and high-definition output that feels modern and clean. This is particularly useful for corporate presentations or high-end product ads where a “polished” look is essential. On the other hand, newer models like Sora 2 offer a glimpse into the future of even more complex generative capabilities, pushing the boundaries of what we thought was possible with simple image-to-video conversion.

Why Motion Graphics are Essential for Success on Social Platforms

The psychology of social media is built on the “stop the scroll” moment. A static photo, no matter how beautiful, is something users have seen millions of times. A video that starts with a subtle, unexpected movement triggers a curiosity response in the brain, making the viewer more likely to stop and engage with the content. This is why even a 5-second loop generated by AI can be more effective than a high-end static advertisement.

By utilizing this technology, social media managers can transform their entire backlog of brand photos into a library of “living” assets. This effectively multiplies the value of their existing photography budget. Instead of paying for a new shoot, they can simply repurpose their best images into fresh video content that performs better in the current digital landscape. This efficiency is what makes AI-driven motion design a game-changer for modern digital strategy.